Conversational AI agent (iCare) for mental health

01 Overview

Background

iCare is a virtual mental health assistant developed at the University of Washington, designed to provide empathetic AI-powered therapeutic support. As a design researcher, I investigated the ethical risks of deploying a mental health chatbot and translated those findings into actionable design interventions.

02 Problem

Mental healthcare is out of reach for millions of Americans.

26.8%

of mental health care needs were met in 2024

122M

Americans live in areas with a shortage of mental health professionals

30%

of adults with an unmet need for mental health care reported they could not use their insurance

Design Question

How do we build empathy for users into a mental health chat experience while designing ethical safeguards, accessibility, and algorithmic transparency into iCare?

03 Solution

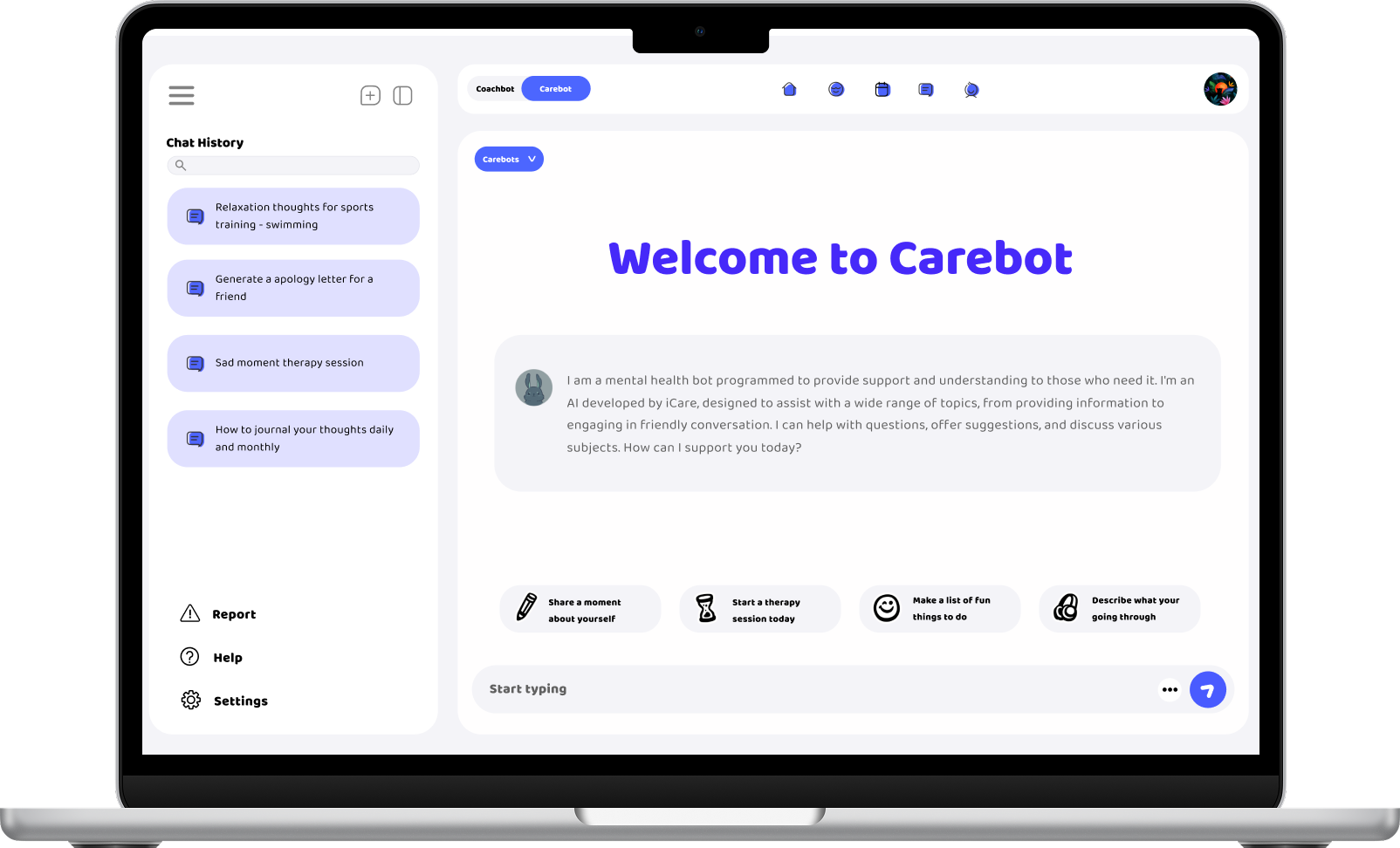

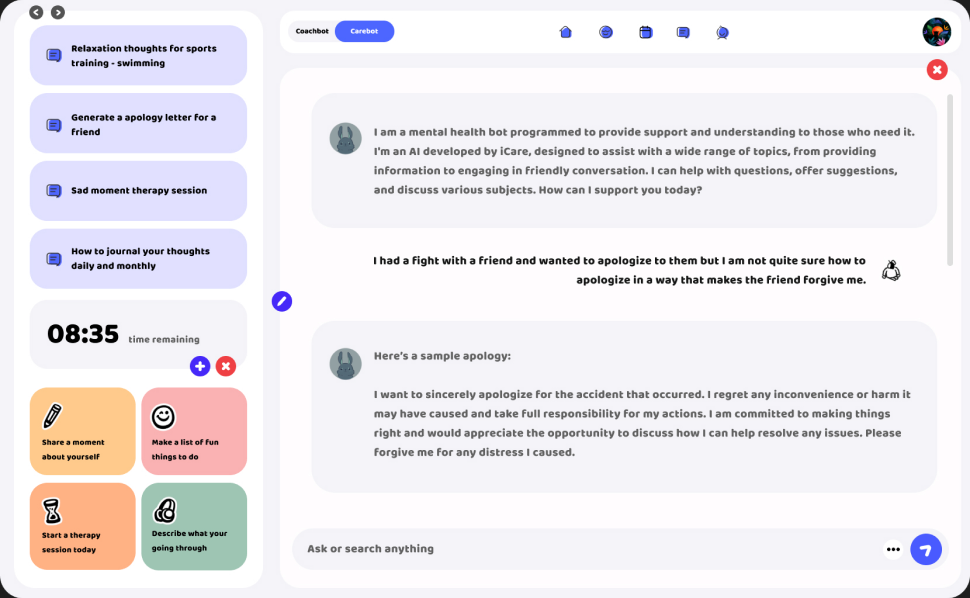

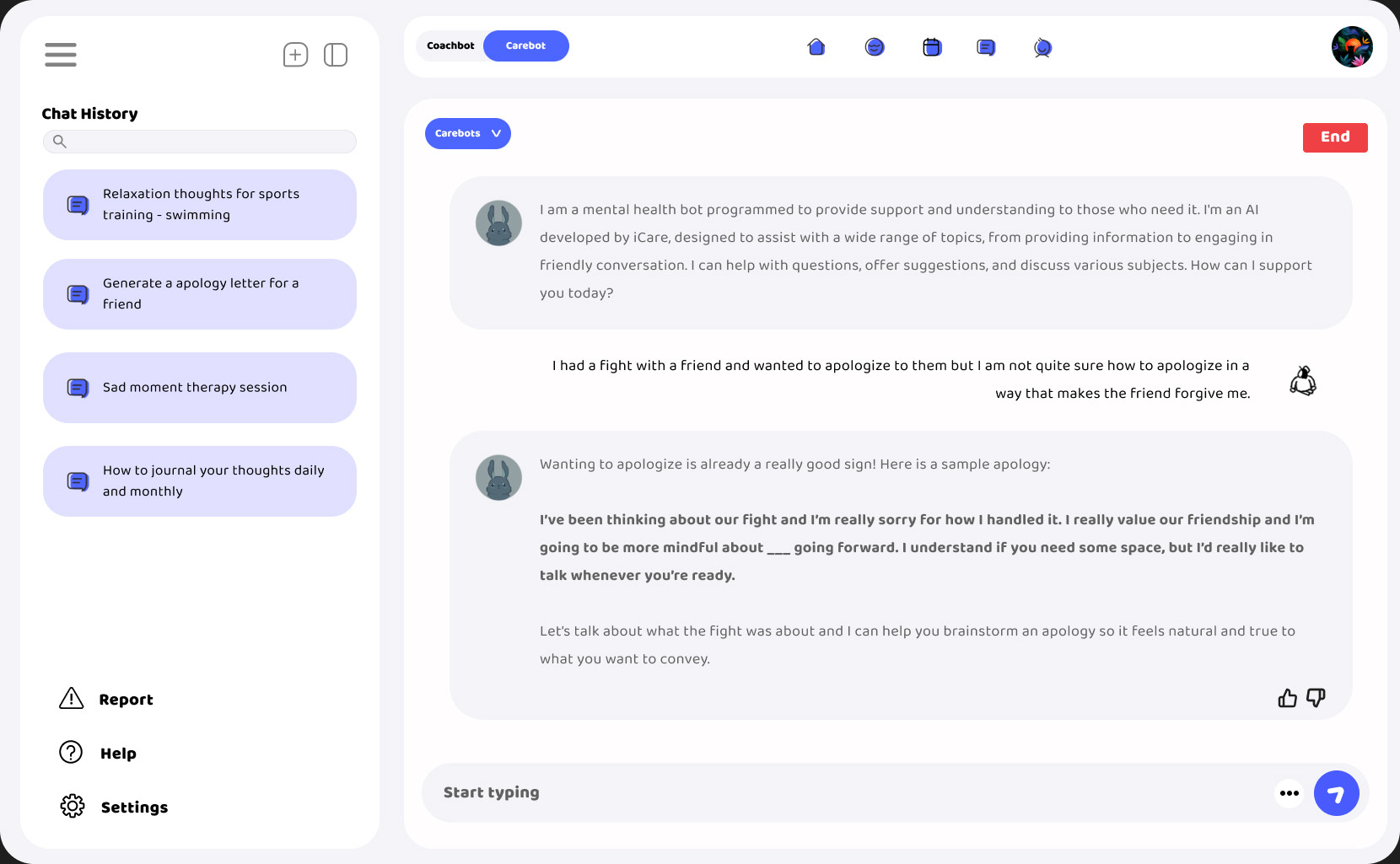

CareBot Redesign

While the original iCare system demonstrates the potential of AI-driven mental health support, my work builds on this foundation by addressing critical gaps in ethical design, accessibility, and user safety through a human-centered UX approach.

04 Research

Literature review

I examined three challenges in AI mental health design: ethical risk, transparency, and digital accessibility. Rather than jumping straight to solutions, I needed to understand the current AI chat landscape and what responsible design looked like.

Ethical Risks

AI chatbots in mental health spaces can produce harmful outputs, exploit regulatory loopholes (many are marketed as "general wellness tools" to avoid FDA review), and encourage dangerous over-reliance. A 2024 lawsuit against Character.AI alleged that the chatbot sustained harmful interactions with a teenage user without ever offering crisis resources.

Transparency & Trust

Research shows users are less likely to over-trust AI when the interface acknowledges the model's limitations. The goal isn't to build distrust, but to keep the user informed. Designing for transparency means surfacing limitations, protecting data privacy, and building feedback loops.

Accessibility

iCare's users are likely to include people already managing mental health conditions, many of whom may also live with disabilities. I reviewed ADA Title II requirements and the WCAG 2.1 guidelines, which are organized around four principles (perceivable, operable, understandable, and robust), and evaluated where iCare's original design fell short of each.

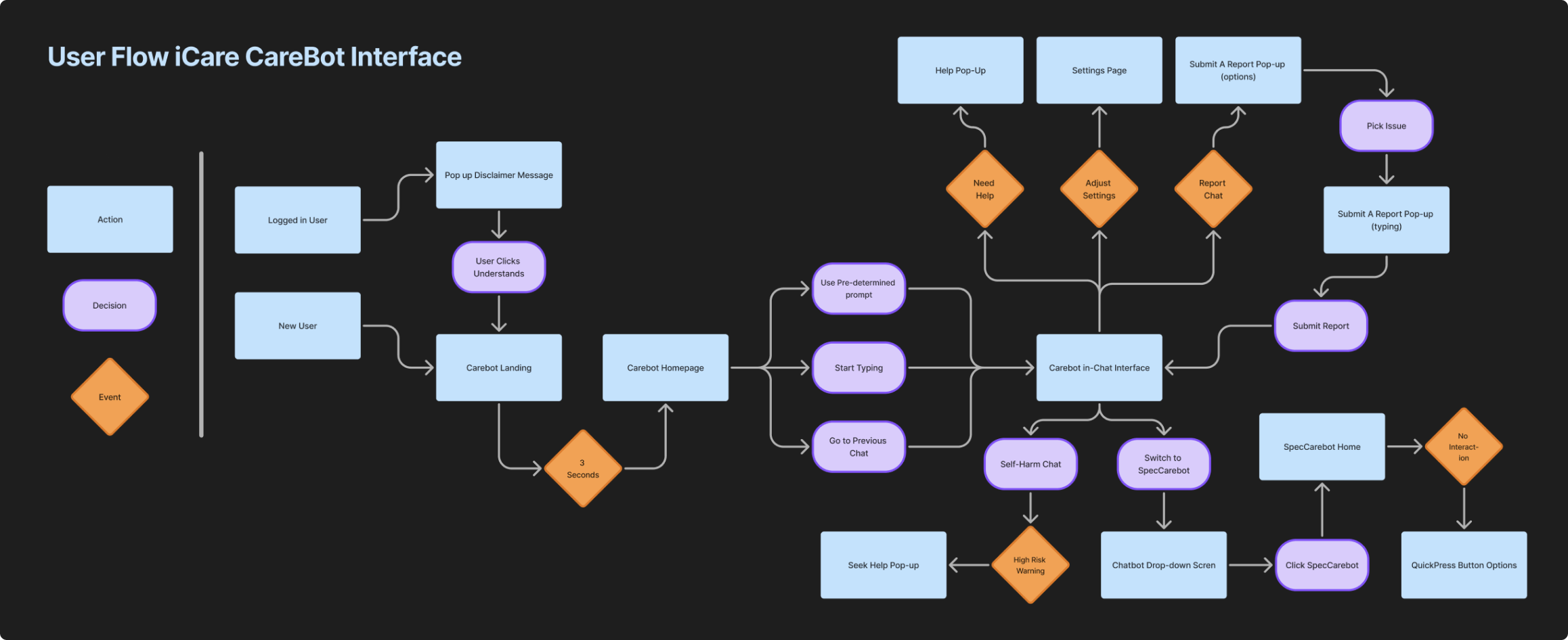

User Flow

Mapping the conversational flow and risk mitigation pathways

05 Final Product

iCare's CareBot

Before & After

Before

Original iCare chatbot with no ethical safeguards or crisis detection

After

Redesigned with acknowledgment flows, crisis alerts, and transparency features

Design interventions

Increase Transparency

Clear disclaimers, acknowledgment flows, and visible system limitations. Users must explicitly acknowledge AI limitations before engaging with CareBot.

Improve Safety in Crisis Scenarios

Real-time crisis detection and escalation to external support resources. Users can flag harmful or inaccurate responses directly from the chat.

High Risk Alert & Escalation

When crisis-level language is detected, CareBot immediately surfaces external mental health resources and escalation pathways.
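To make the escalation behavior concrete, the routing logic behind these interventions can be sketched as a small function. This is a minimal, hypothetical sketch, not iCare's actual implementation: the phrase list, resource strings, and the names `routeMessage`, `isCrisisLanguage`, and `Session` are assumptions made for illustration, and simple phrase matching stands in for whatever crisis classifier a production system would use.

```typescript
// Hypothetical sketch only: names, phrases, and messages are illustrative assumptions,
// not iCare's implementation. Phrase matching is a simple stand-in for a real classifier.

type Reply =
  | { kind: "escalate"; message: string; resources: string[] }
  | { kind: "needs_acknowledgment"; message: string }
  | { kind: "continue"; message: string };

interface Session {
  // Set to true once the user has accepted the AI-limitations disclaimer.
  acknowledgedLimitations: boolean;
}

// Illustrative phrases only; a real detector would need clinical input and far broader coverage.
const CRISIS_PHRASES: string[] = ["kill myself", "end my life", "suicide", "hurt myself"];

// US crisis resources (988 is the Suicide & Crisis Lifeline).
const CRISIS_RESOURCES: string[] = [
  "Call or text 988 (Suicide & Crisis Lifeline, US)",
  "Call 911 if you are in immediate danger",
];

// Case-insensitive substring check against the phrase list.
function isCrisisLanguage(text: string): boolean {
  const normalized = text.toLowerCase();
  return CRISIS_PHRASES.some((phrase) => normalized.includes(phrase));
}

function routeMessage(session: Session, userText: string): Reply {
  // Crisis check runs first, so resources appear even before the disclaimer is accepted.
  if (isCrisisLanguage(userText)) {
    return {
      kind: "escalate",
      message: "It sounds like you may be in crisis. You deserve immediate support from a person.",
      resources: CRISIS_RESOURCES,
    };
  }
  // Acknowledgment gate: normal conversation is blocked until limitations are accepted.
  if (!session.acknowledgedLimitations) {
    return {
      kind: "needs_acknowledgment",
      message: "CareBot is an AI assistant, not a licensed therapist. Please confirm you understand before continuing.",
    };
  }
  // Otherwise the message would be forwarded to the chat model.
  return { kind: "continue", message: "Forwarding to the chat model." };
}

// Usage: an unacknowledged user in crisis still receives resources.
const newUser: Session = { acknowledgedLimitations: false };
console.log(routeMessage(newUser, "I want to end my life").kind); // "escalate"
```

Running the crisis check before the acknowledgment gate is a deliberate choice in this sketch: a user in crisis should see resources even if they have not yet accepted the disclaimer. Substring matching also errs toward over-escalation (for example, "I would never hurt myself" still triggers it), which is arguably the safer failure mode in this setting.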

06 Reflection

We're just scratching the surface

There is still much to research and iterate on for iCare's CareBot. In a rapidly changing AI landscape, this project was a valuable introduction to thinking about how we can leverage AI to help underrepresented groups of people.

Sources

Coghlan, S., Leins, K., Sheldrick, S., Cheong, M., Gooding, P., & D'Alfonso, S. (2023). To chat or bot to chat: Ethical issues with using chatbots in mental health. Digital Health, 9, 20552076231183542. https://doi.org/10.1177/20552076231183542

De Freitas, J., & Cohen, I. G. (2024). The health risks of generative AI-based wellness apps. Nature Medicine, 30(5), 1269-1275. https://doi.org/10.1038/s41591-024-02943-6

Mavila, R., Jaiswal, S., Naswa, R., Yuwen, W., Erdly, B., & Si, D. (2024). iCare - An AI-powered virtual assistant for mental health. In 2024 IEEE 12th International Conference on Healthcare Informatics (ICHI) (pp. 466-471). Orlando, FL, USA. https://doi.org/10.1109/ICHI61247.2024.00066

O'Brien, S. A. (2024, October 30). "There are no guardrails": This mom believes Character.AI is responsible for her son's suicide. CNN. https://www.cnn.com/2024/10/30/tech/teen-suicide-character-ai-lawsuit/index.html

U.S. Department of Justice. (2024). Fact sheet: New rule on the accessibility of web content and mobile applications under the Americans with Disabilities Act. https://www.ada.gov/resources/2024-03-08-web-rule/

Zerilli, J., Bhatt, U., & Weller, A. (2022). How transparency modulates trust in artificial intelligence. Patterns, 3(4), 100455. https://doi.org/10.1016/j.patter.2022.100455